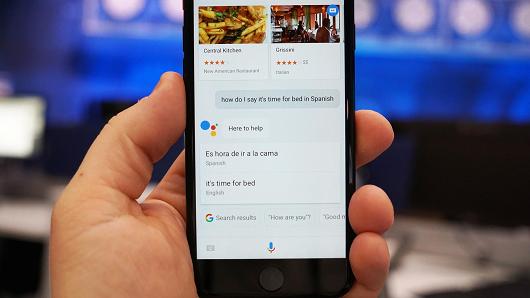

Human-AI relations need to improve. Amazon’s Alexa personal assistant operates within one of the world’s largest online stores and deserves credit for pulling information from Wikipedia. But what if it can’t play that rad pop banger you just heard and responds, “I’m sorry, I don’t understand the question”? Disappointing, right?

All the celebrated digital assistants, including Google’s Google Assistant and Apple’s Siri, are capable of frustrating failures that can feel like artificial stupidity. In response, Google has started a new research push to understand and improve the relationship between humans and AI. PAIR, the People + AI Research initiative, was announced this Monday, and it will be led by two data visualization experts, Fernanda Viégas and Martin Wattenberg.

Get your Machine Learning certification today. DexLab Analytics offers comprehensive Machine Learning courses.

Users get frustrated when a virtual assistant fails to perform a given task. In this context, Viégas says she is keen to study how people form expectations about what systems can and cannot do, which is to say how virtual assistants should be designed to nudge us toward asking only for things they can perform, leaving no room for disappointment.

Making Artificial Intelligence more transparent to everyone, and not just to professionals, is going to be a major goal of PAIR. The initiative has also released two open source tools to help data scientists understand the data they are feeding into Machine Learning systems. Interesting, isn’t it?

The deep learning programs that have recently gained a lot of attention for analyzing our personal data or diagnosing life-threatening diseases are often called ‘black boxes’ by critical researchers, meaning it can be tricky to see why a system produces a specific decision, like a diagnosis. Here lies the problem. In life-and-death situations, inside clinics or on the road in autonomous vehicles, these opaque algorithms may pose serious risks. Viégas says, “The doctor needs to have some sense of what’s happening and why they got a recommendation or prediction.”

Google’s project comes at a time when the human consequences of AI are being questioned more than ever. Recently, the Ethics and Governance of Artificial Intelligence Fund, in association with the Knight Foundation and LinkedIn cofounder Reid Hoffman, announced $7.6 million in grants to civil society organizations to study the changes AI is going to cause in labor markets and criminal justice systems. In a similar spirit, Google says most of PAIR’s work will take place in the open. MIT professor Hal Abelson and Harvard professor Brendan Meade are going to join forces with PAIR to study how AI can improve education and science.

Closing Thoughts – If PAIR can integrate AI seamlessly into prime industries, like healthcare, it could pave the way for new customers to reach Google’s AI-centric cloud business. Viégas reveals she would also like to work closely with Google’s product teams, like the ones responsible for developing Google Assistant. According to her, such collaborations come with an added advantage, since they keep people engaged with the product and, in turn, with the company’s broader services. PAIR is a necessary effort not only to help society understand what is going on between humans and AI but also to boost Google’s bottom line.

DexLab Analytics is your gateway to a great career in data analytics. Enroll in a Machine Learning course online and get started.

